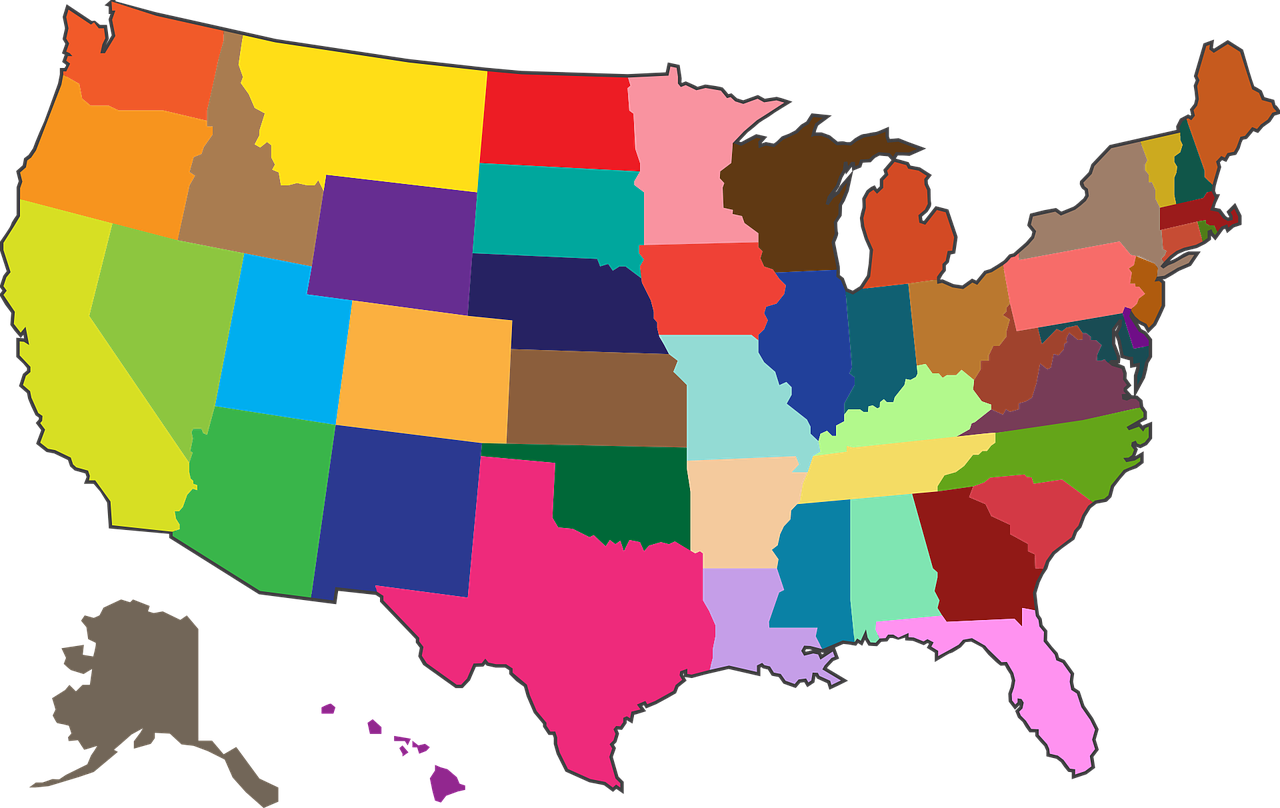

Why Is America Called The United States?

The United States of America is the name given to the confederation of colonies that began forming the country as early as 1775. During the War of Independence, these colonies sought to form a new country and a new identity, separate from the British Empire. Benjamin Franklin is credited with the name of The United …